How to identify the criteria that influence a consumer's choice of product or service.

The basics

MaxDiff (Maximum Difference Scaling) is an approach for measuring preferences and ranking a large number of alternative proposals relative to one another.

Unlike CBC (Choice-Based Conjoint), this methodology uses only one attribute (the list of alternatives), and the respondent chooses within a series of subsets of that list.

The result is an individual score for each proposal and each respondent. These scores give both the ranking of the alternatives and the relative importance of each one.

Advantages of a MaxDiff:

- It ranks a large number of proposals without evaluating all of them at once: choice tasks are repeated, each time with only a few components from the initial list

- It obtains scores rather than a simple ranking value, allowing us to also measure the distance between two alternatives

- It simplifies the respondents' task: they choose the ‘most preferred’ and ‘least preferred’ in each subset

The experimental design

Solirem develops the experimental design according to the number of products to be assessed.

As with any conjoint analysis, the design is constructed to be balanced and orthogonal, that is to say, every alternative is shown the same number of times and its appearance does not depend on which other alternatives are shown with it.

During this stage of the development, the design is tested against a defined sample size to determine the optimum number of choice tasks and the number of proposals shown in each task.
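As an illustration only, the sketch below builds a simple design in which every item is shown (almost) equally often. It is not Solirem's design procedure: the function name `make_design`, its parameters and the "least shown first" rule are assumptions made for the example, and the sketch controls exposure balance only, not how often pairs of items appear together.

```python
import random
from collections import Counter

def make_design(n_items, n_tasks, set_size, seed=0):
    """Hypothetical helper: build choice tasks with near-equal item exposure."""
    rng = random.Random(seed)
    shown = Counter({item: 0 for item in range(n_items)})
    tasks = []
    for _ in range(n_tasks):
        # Prefer the items shown least so far; break ties at random.
        order = sorted(range(n_items), key=lambda i: (shown[i], rng.random()))
        task = order[:set_size]
        rng.shuffle(task)  # randomize the on-screen position of each item
        shown.update(task)
        tasks.append(task)
    return tasks, shown

# Example: 15 items, 12 screens of 5 items (assumed values).
tasks, shown = make_design(n_items=15, n_tasks=12, set_size=5)
exposure_gap = max(shown.values()) - min(shown.values())
print(exposure_gap)  # difference between the most and least shown item
```

Changing `n_tasks` or `set_size` and checking the exposure gap is one simple way to compare candidate designs before fieldwork.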

Collecting information

The approach presents the respondent with a series of ‘sets’ (generally 3 to 6 proposals each) drawn from the full list of proposals being evaluated. The respondent is then asked to choose the “best” and the “worst” (or only the “best”) in each ‘set’.

The question is repeated over a number of screens (choice tasks), each time presenting a different ‘set’ of ‘items’.

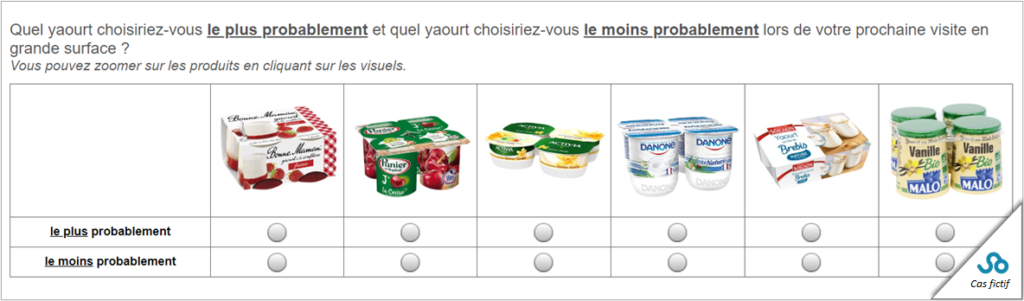

Example choice task #1 (products ranking)

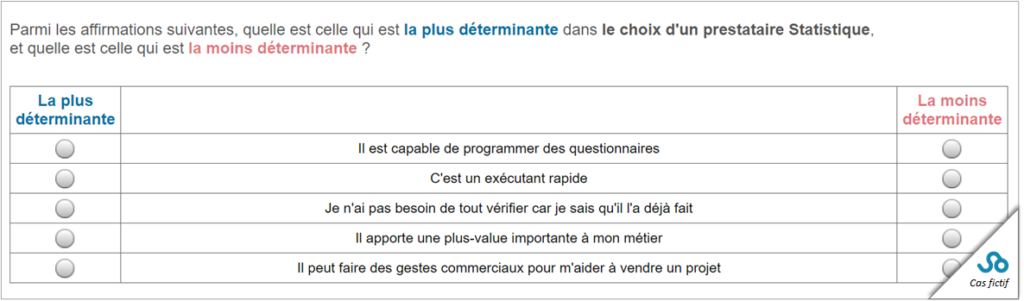

Example choice task #2 (claims ranking)

Score estimates

Estimating utility values at the end of fieldwork

As with CBC, scores are calculated at the data processing stage using a hierarchical Bayesian algorithm, which yields results (utility values) for each individual respondent.

Various parameters are estimated at individual level by means of an iterative process which takes into account both the choices of each individual and the overall distribution of these choices. Estimates at individual level improve the precision of the measured importance.
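To make the notion of a utility value more concrete, here is a minimal sketch of how utilities translate into the probability of an item being picked as “best” or “worst” on one screen. It assumes the commonly used multinomial logit formulation, which the text above does not specify, and it does not implement the hierarchical Bayesian estimation itself. The function name and the utility values are hypothetical.

```python
import math

def best_worst_probabilities(utilities, shown):
    """Logit probabilities of each shown item being chosen best or worst."""
    exp_pos = {i: math.exp(utilities[i]) for i in shown}   # drives "best"
    exp_neg = {i: math.exp(-utilities[i]) for i in shown}  # drives "worst"
    total_pos = sum(exp_pos.values())
    total_neg = sum(exp_neg.values())
    p_best = {i: exp_pos[i] / total_pos for i in shown}
    p_worst = {i: exp_neg[i] / total_neg for i in shown}
    return p_best, p_worst

# Hypothetical utilities for one respondent and one screen of four items.
utilities = {"A": 1.2, "B": 0.3, "C": -0.4, "D": -1.1}
p_best, p_worst = best_worst_probabilities(utilities, ["A", "B", "C", "D"])
```

The estimation searches for the utilities that make the observed best and worst choices most likely, while the sample-level distribution keeps each individual's estimates from drifting too far on limited data.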

Estimating utility values during fieldwork

Advantage: It is possible to expand on a MaxDiff task by dynamically asking specific questions in relation to the observed classification of a given respondent. For example, you can have a list of 15 products evaluated, while asking a series of specific questions about the “preferred” product in the same questionnaire (or about the TOP 3 for example).

Disadvantage: the calculation method is simplified compared to the one presented previously, because it only takes into account the individual's own responses at time T. The calculated score is less precise because no “correction” is made using the responses of the entire sample. We nevertheless keep these individually calculated scores after fieldwork ends, because the overall estimate could contradict the individual ranking that was used during the task.

The MaxDiff analysis makes it possible to obtain utility scores for each individual and for each alternative evaluated.
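One simple way to score a single respondent during the interview is a count-based score: the number of times an item was chosen as best, minus the number of times it was chosen as worst, divided by the number of times it was shown. The text does not say which simplified calculation is used, so the sketch below is an assumption, and the function name and sample responses are hypothetical.

```python
from collections import Counter

def count_scores(responses):
    """responses: list of (shown_items, best_item, worst_item) for one respondent."""
    best, worst, shown = Counter(), Counter(), Counter()
    for items, best_item, worst_item in responses:
        shown.update(items)
        best[best_item] += 1
        worst[worst_item] += 1
    # Score lies in [-1, 1]: +1 if always chosen best, -1 if always chosen worst.
    return {i: (best[i] - worst[i]) / shown[i] for i in shown}

# Hypothetical answers from one respondent over three screens.
responses = [
    (["A", "B", "C", "D"], "A", "D"),
    (["A", "C", "E", "F"], "A", "F"),
    (["B", "D", "E", "F"], "B", "D"),
]
scores = count_scores(responses)
top3 = sorted(scores, key=scores.get, reverse=True)[:3]
```

The resulting `top3` list could then drive the follow-up questions about the respondent's preferred products.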

Complementary statistical analyses

Where appropriate, and depending on your needs and objectives, secondary analyses can be carried out.

Typology

Solirem can segment the scores obtained from the basic MaxDiff analysis. This shows which alternatives work together and which do not. The analysis provides a map of the various consumer preferences for certain ‘packages’.

TURF

TURF analysis (Total Unduplicated Reach and Frequency) can be applied to the MaxDiff results by transforming the calculated utility values into choice probabilities. This analysis makes it possible to test different hypotheses for optimum ‘packages’, i.e. selecting the product references which should appear together (in a department, in a catalog, etc.).
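The sketch below illustrates the idea on a toy scale. It converts each respondent's utilities into choice probabilities with a softmax, counts a respondent as “reached” when at least one item in the package has a probability above a threshold, and searches all packages of a given size. The softmax transformation, the 0.2 threshold, the function names and the utility values are all assumptions chosen for the example, not a description of Solirem's simulator.

```python
import math
from itertools import combinations

def choice_probabilities(utilities):
    """Softmax: turn one respondent's utilities into choice probabilities."""
    top = max(utilities.values())
    exp_u = {i: math.exp(u - top) for i, u in utilities.items()}
    total = sum(exp_u.values())
    return {i: e / total for i, e in exp_u.items()}

def reach(package, probs_by_respondent, threshold=0.2):
    """Share of respondents with at least one package item above the threshold."""
    reached = sum(
        any(p[i] >= threshold for i in package) for p in probs_by_respondent
    )
    return reached / len(probs_by_respondent)

def best_package(items, probs_by_respondent, size):
    """Exhaustive search; fine for small lists, slow for large ones."""
    return max(combinations(items, size),
               key=lambda c: reach(c, probs_by_respondent))

# Hypothetical utilities for three respondents and four products.
sample = [
    {"A": 2.0, "B": 0.0, "C": -1.0, "D": -1.0},
    {"A": 1.5, "B": 0.5, "C": -1.0, "D": -1.0},
    {"A": -1.0, "B": -1.0, "C": 2.5, "D": -0.5},
]
probs = [choice_probabilities(u) for u in sample]
best = best_package("ABCD", probs, size=2)
print(best, reach(best, probs))
```

In this toy data the two products with the highest average appeal are not automatically the best pair: what matters is that the second product reaches respondents the first one misses, which is the “unduplicated” part of TURF.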

To let you explore TURF thoroughly and independently, we have developed a dedicated simulator that helps you unlock the full potential of your MaxDiff results.